Project Description

HUMAINE is a platform that supports multilateral negotiations among humans and agents in an immersive environment, in which the agents are represented by avatars that can see and hear human negotiators, and speak to them in a synthesized voice. The original impetus for HUMAINE was to use AI to help teach the fine art of haggling to students taking a class in Mandarin language and culture at Rensselaer Polytechnic Institute. It has evolved into a general negotiation platform that will be the basis for a new ANAC (Automated Negotiating Agents Competition) league that will hold its first competition (HUMAINE 2020) at IJCAI-PRICAI in Yokohama in July 2020.

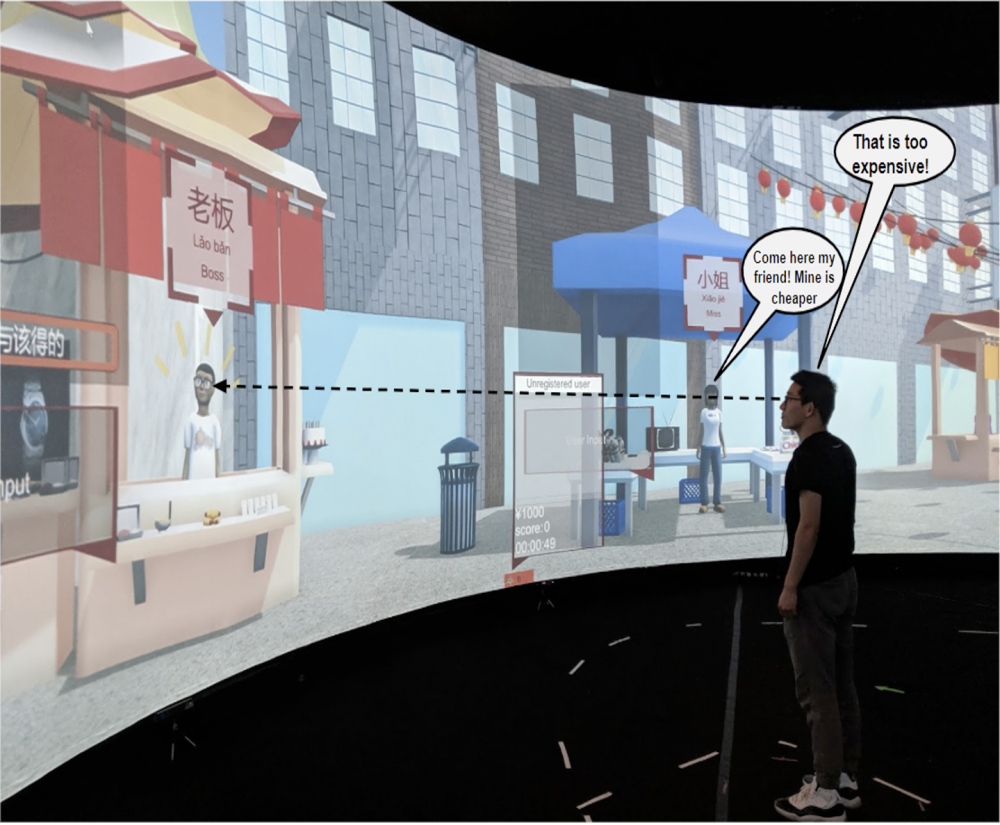

HUMAINE is distinguished from other agent negotiation research efforts in at least two ways. First, it involves multilateral negotiations that reflect certain real-life situations, such as an individual buyer haggling with two street vendors. Even in this simple three-agent case, such interactions can be complex and strongly coupled. Not only is the buyer engaged in a competition with each vendor, but the vendors are competing with one another both directly and indirectly for the buyer’s business. Successful agent strategies are likely to require a mixture of clever algorithms and clever psychology. Second, HUMAINE is unlike other agent competitions in that it is held in an immersive environment, enabling humans to engage with agents naturally, through speech and gesture rather than text. This makes it easier to explore some of the non-algorithmic nuances that play an important role in negotiations among humans.

Technical Details

Detailed technical documentation and specifics of the competition rules can be seen here — Link to document

Sample agents and other code can be seen here on GitHub — https://github.com/humaine-anac

The code and documentation are updated continuously, so please keep checking the two links above for the latest information.

HUMAINE 2020 competition

In the 2020 competition, two competing seller agents will negotiate with a human buyer who wishes to purchase various ingredients for making cakes and pancakes. The buyer will look at one of the avatars and speak to it in English to make an offer. The agent must interpret the utterance, determine an appropriate negotiation act (such as a counteroffer, acceptance, or rejection) in light of the utterance and any other relevant context, and determine how best to render that act into an utterance and an accompanying gesture. The winning agent will be the one that achieves the highest utility across multiple rounds of negotiation with various humans and other agents. An award will also be given to the agent deemed most engaging.
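The interpret, decide, and render steps described above can be sketched roughly as follows. This is only an illustration under stated assumptions, not the HUMAINE agent API: the names `Offer`, `Response`, `interpret`, and `decide`, the regular expression, the unit costs, the gesture labels, and the margin threshold are all hypothetical, and the sample agents on GitHub may be structured quite differently.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical per-unit seller costs; NOT taken from the competition rules.
UNIT_COST = {"egg": 0.30, "flour": 0.50, "milk": 0.40}

@dataclass
class Offer:
    quantity: int
    good: str
    price: float  # total price proposed by the buyer

@dataclass
class Response:
    act: str        # "accept", "counteroffer", or "reject"
    utterance: str  # text to be spoken by the avatar
    gesture: str    # hypothetical gesture label

# Very simple pattern for offers such as "4 eggs for $3" (an assumption;
# real speech would need far more robust language understanding).
OFFER_PATTERN = re.compile(
    r"(\d+)\s+(?:cups? of\s+)?(egg|flour|milk)s?\s+for\s+\$?(\d+(?:\.\d+)?)",
    re.IGNORECASE,
)

def interpret(utterance: str) -> Optional[Offer]:
    """Extract an offer from the buyer's utterance, or None if none is found."""
    match = OFFER_PATTERN.search(utterance)
    if match is None:
        return None
    return Offer(int(match.group(1)), match.group(2).lower(), float(match.group(3)))

def decide(offer: Optional[Offer], target_margin: float = 0.5) -> Response:
    """Choose a negotiation act and render it as an utterance plus a gesture."""
    if offer is None:
        return Response("reject", "Sorry, I did not catch your offer.", "shrug")
    cost = UNIT_COST[offer.good] * offer.quantity
    utility = offer.price - cost  # seller utility modeled as simple profit
    if utility >= target_margin * cost:
        text = f"Deal: {offer.quantity} {offer.good} for ${offer.price:.2f}."
        return Response("accept", text, "nod")
    if utility > 0:
        ask = cost * (1 + target_margin)
        text = f"How about {offer.quantity} {offer.good} for ${ask:.2f}?"
        return Response("counteroffer", text, "open_palm")
    return Response("reject", "I cannot sell at that price.", "head_shake")
```

For example, `decide(interpret("I'll buy 4 eggs for $3"))` yields an acceptance under these assumed costs, while a lower bid produces a counteroffer or a rejection. A competitive agent would also need to account for the rival seller and the negotiation history, which this sketch ignores.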

Interested? Here is a link to the HUMAINE 2020 CfP.

Publications

2020

- "HUMAINE: Human Multi-Agent Immersive Negotiation Competition," in Extended Abstracts of the 2020 CHI Conference on Human Factors in Computing Systems.

2019

- "Embodied Conversational AI Agents in a Multi-modal Multi-agent Competitive Dialogue," in Proceedings of the Twenty-Eighth International Joint Conference on Artificial Intelligence, Demos, pp. 6512-6514.